Member Insights

From AI promise to AI economics: why MoDaaS is a turning point for AI-native telcos

Vikas Kumar, Principal Global Enterprise Architect at Dell Technologies, discusses how AI in telecom will not fail due to lack of intelligence, but due to the inability to scale it sustainably.

Introduction: AI needs an operating model, not just ambition

AI is becoming foundational to how networks are built, operated, and optimised. The real challenge is no longer proving its value — it is scaling it across domains without losing control of cost, performance, and architectural choice.

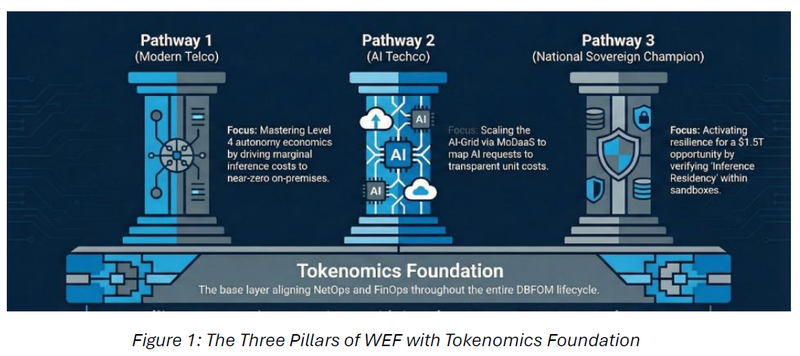

The World Economic Forum's (WEF) 2026 pathways show how this shift can work for telcos:

- Modern Telco — modernising the core connectivity business

- AI Techco — moving into AI-led managed services

- National Sovereign Champion — becoming a sovereign infrastructure provider

Each looks different. But all depend on solving the same problem: AI economics at scale.

The TM Forum MoDaaS Blueprint GB1085 (co-chaired by Dell Technologies, Telstra, and PwC) addresses this directly, shifting the focus from deploying models to governing and consuming AI efficiently across the full network estate.

Without that economic clarity, AI-driven autonomy is not a strategy. It is operational risk.

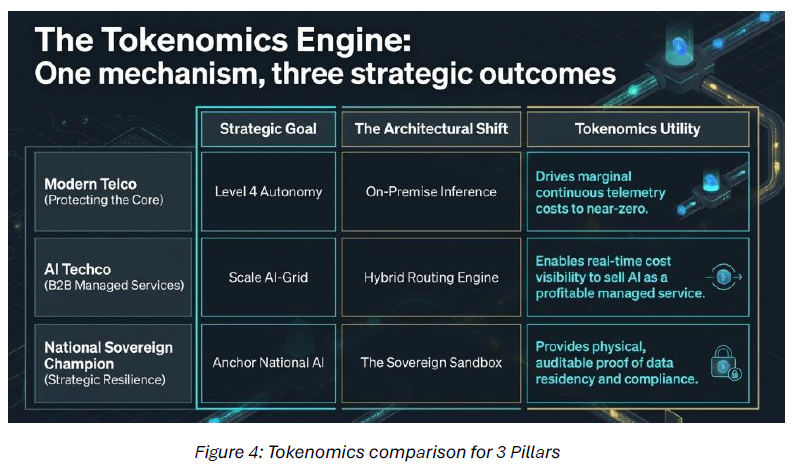

The modern telco — mastering the economics of autonomy

The WEF’s first pathway is that telcos must protect and modernise core connectivity. In 2026, “modernise” effectively means Level 4 autonomy – self-healing, self-optimising networks that rebalance domains (for example, the RAN), isolate faults and manage power in milliseconds, not minutes. TM Forum’s latest research shows this is already happening in live domains, not on slides. For every operator, Level 4 has moved from vision statement to competitive baseline.

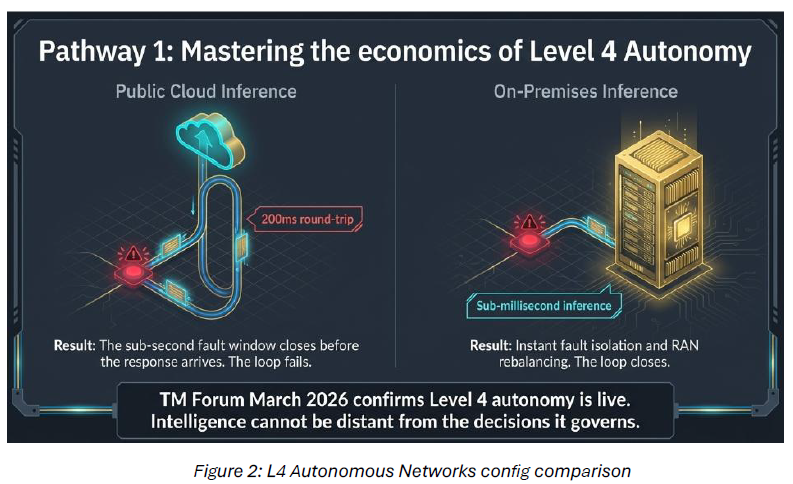

That shift exposes two hard constraints for all telcos: latency and tokenomics. If the brain of an autonomous network sits in distant hyperscale regions, every control loop inherits that distance. A 200 ms round trip to the cloud cannot close a sub-second fault. Intelligence has to live where decisions are executed – inference on-prem and at the edge, close to the data and the actuators.
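To make the latency constraint concrete, here is a minimal Python sketch of a control-loop budget. Only the 200 ms cloud round trip comes from the text above. The `ControlLoop` class, the number of round trips, the inference and actuation times, the 5 ms edge round trip, and the 500 ms budget are illustrative assumptions, not measured values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ControlLoop:
    """Hypothetical model of one closed-loop action (all fields are assumptions)."""

    round_trips: int      # exchanges with the inference site needed to close the loop
    rtt_ms: float         # network round-trip time to the inference site
    inference_ms: float   # model execution time per exchange
    actuation_ms: float   # time to apply the change on the network element

    def total_ms(self) -> float:
        # Each exchange pays the network round trip plus the model run;
        # actuation is paid once at the end.
        return self.round_trips * (self.rtt_ms + self.inference_ms) + self.actuation_ms

    def fits(self, budget_ms: float) -> bool:
        return self.total_ms() <= budget_ms


# Same loop, two placements. Only rtt_ms differs.
cloud = ControlLoop(round_trips=4, rtt_ms=200.0, inference_ms=30.0, actuation_ms=50.0)
edge = ControlLoop(round_trips=4, rtt_ms=5.0, inference_ms=30.0, actuation_ms=50.0)

BUDGET_MS = 500.0  # assumed target for a sub-second fault

for name, loop in (("cloud", cloud), ("edge", edge)):
    print(f"{name}: {loop.total_ms():.0f} ms, fits budget: {loop.fits(BUDGET_MS)}")
```

Under these assumed figures the cloud-hosted loop spends most of its time in transit, while the edge-hosted loop is dominated by inference and actuation. The point is the shape of the arithmetic, not the specific numbers.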

Economically, Level 4 turns inference into a continuous utility. Every network element is streaming telemetry through prediction and optimisation loops that never stop. At that scale, per-token cloud pricing becomes a structural margin leak. TM Forum’s MoDaaS Blueprint treats the token as the atomic unit of AI economics: push high-volume, routine inference onto on-prem infrastructure, drive marginal cost toward near-zero once CapEx is amortised, and ensure autonomy expands margin instead of silently compressing it.
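The token-level economics can be sketched as a simple break-even calculation. The following Python example is an illustration under stated assumptions, not a formula from the MoDaaS Blueprint: the function names and every figure (CapEx, amortisation period, fixed OpEx, per-million-token prices) are hypothetical placeholders that an operator would replace with its own numbers.

```python
from typing import Optional


def cloud_monthly_cost(tokens: float, price_per_million: float) -> float:
    """Pure pay-per-token cost: scales linearly with volume."""
    return tokens / 1_000_000 * price_per_million


def onprem_monthly_cost(
    tokens: float,
    capex: float,
    amortisation_months: int,
    fixed_opex_per_month: float,
    marginal_per_million: float,
) -> float:
    """Fixed monthly cost (amortised CapEx plus fixed OpEx) plus a small per-token cost."""
    fixed = capex / amortisation_months + fixed_opex_per_month
    return fixed + tokens / 1_000_000 * marginal_per_million


def break_even_tokens(
    capex: float,
    amortisation_months: int,
    fixed_opex_per_month: float,
    marginal_per_million: float,
    cloud_price_per_million: float,
) -> Optional[float]:
    """Monthly token volume above which on-prem is cheaper, or None if it never is."""
    saving_per_million = cloud_price_per_million - marginal_per_million
    if saving_per_million <= 0:
        return None
    fixed = capex / amortisation_months + fixed_opex_per_month
    return fixed / saving_per_million * 1_000_000


# Hypothetical inputs, chosen only to make the arithmetic easy to follow.
threshold = break_even_tokens(
    capex=1_200_000,
    amortisation_months=48,
    fixed_opex_per_month=5_000,
    marginal_per_million=0.50,
    cloud_price_per_million=2.00,
)
print(f"break-even volume: {threshold:,.0f} tokens per month")
```

Below the break-even volume the fixed on-prem cost dominates and cloud is cheaper; above it, each additional token costs only the assumed marginal rate. That is the sense in which continuous, high-volume inference favours owned infrastructure.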

The AI techco — scaling the AI-grid via MoDaaS

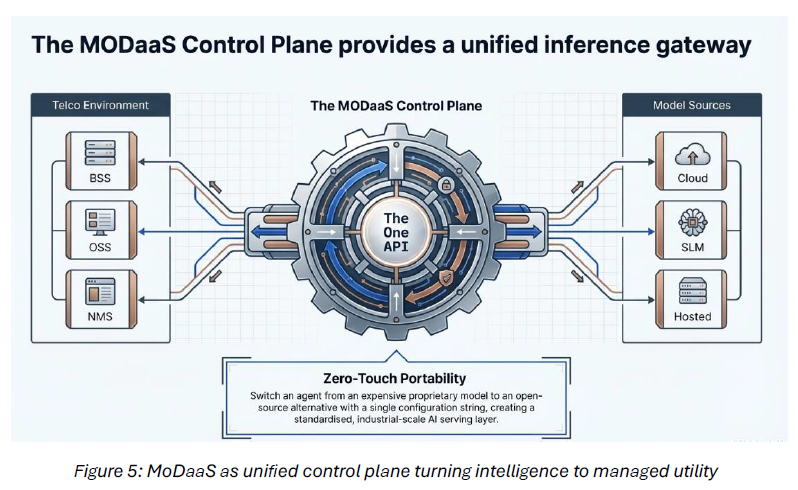

WEF’s second pathway pushes telcos beyond connectivity into AI-powered services. Here, the AI-Grid becomes the core design pattern: a distributed layer of compute and intelligence that behaves like a utility — training where scale exists, inferring where decisions happen, with governance spanning the whole mesh. Getting there means moving past today’s scattered, ungoverned AI pilots. That is exactly what MoDaaS is designed to fix.

MoDaaS is the operating system of the AI Techco. It gives operators a single platform to host and orchestrate many model types — LLMs, SLMs, and domain models — under one policy and routing plane. A hybrid engine sends bursty, non-sensitive workloads to cloud while keeping critical enterprise data and inference on-prem. Crucially, MoDaaS prices every AI request in real time, so telcos can sell “intelligence as a managed service” with the same SLAs, predictability and transparency they once applied to bandwidth.
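As a rough illustration of the hybrid routing and per-request pricing described above, consider the following Python sketch. It is not the MoDaaS Blueprint's interface: `InferenceRequest`, `Target`, `route`, `price`, and the per-million-token rates are all hypothetical names and values, and the policy is reduced to the two attributes the text mentions (sensitivity and burstiness).

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    ON_PREM = "on_prem"
    CLOUD = "cloud"


@dataclass(frozen=True)
class InferenceRequest:
    """Hypothetical request descriptor; the fields are assumptions for illustration."""

    tenant: str
    tokens: int
    sensitive: bool  # carries critical enterprise data
    bursty: bool     # short-lived spike rather than steady load


# Assumed internal rates per million tokens, in an arbitrary currency unit.
RATE_PER_MILLION = {Target.ON_PREM: 0.50, Target.CLOUD: 2.00}


def route(request: InferenceRequest) -> Target:
    """Sensitive work always stays on-prem; only bursty, non-sensitive work goes to cloud."""
    if request.sensitive:
        return Target.ON_PREM
    if request.bursty:
        return Target.CLOUD
    return Target.ON_PREM


def price(request: InferenceRequest, target: Target) -> float:
    """Cost of a single request at the rate of the chosen target."""
    return request.tokens / 1_000_000 * RATE_PER_MILLION[target]


requests = [
    InferenceRequest("bank-a", tokens=200_000, sensitive=True, bursty=True),
    InferenceRequest("retail-b", tokens=500_000, sensitive=False, bursty=True),
    InferenceRequest("noc", tokens=1_000_000, sensitive=False, bursty=False),
]

for req in requests:
    target = route(req)
    print(f"{req.tenant}: {target.value}, cost {price(req, target):.2f}")
```

Checking sensitivity before burstiness is a deliberate choice in this sketch: a sensitive workload never leaves owned infrastructure, even when it arrives as a spike. Because the price is computed at the moment of routing, each request carries its cost with it, which is what makes a metered, SLA-backed service possible.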

The national sovereign champion — the on-premises mandate

The most significant revenue opportunity identified by the WEF is the National Sovereign Champion. With $1.5 trillion in projected global spending on sovereign AI by 2028, governments are actively seeking partners who can anchor national AI stacks — not just participate in them.

A hyperscaler's regional availability zone does not satisfy a sovereign contract. True sovereignty requires on-premises compute. Dell Technologies’ role in this architecture is to provide the Sovereign Sandbox — the physical, secure, and high-performance foundation that makes the sovereign promise real and auditable. When an operator runs MoDaaS on infrastructure they own, they can prove to a regulator that not a single token of sensitive national data ever left the country.

That capability transforms a telco from a regulated utility into a strategic national asset. The contracts that follow are long-term, premium, and structurally different from anything the connectivity business has historically produced.

Architectural conclusion

Whether the goal is to be a Modern Telco optimising operational margins, an AI Techco scaling managed services for enterprise clients, or a National Sovereign Champion anchoring a country's AI infrastructure — the architectural answer is the same.

Intelligence requires gravity.

Bring the intelligence on premises. Govern it through the MoDaaS framework. Make the economics visible through tokenomics.

The MoDaaS blueprint introduces a control layer that helps operators manage AI at scale, regardless of where it runs. This is achieved through an Operational Interaction Flow that ensures every AI request is governed and optimized.

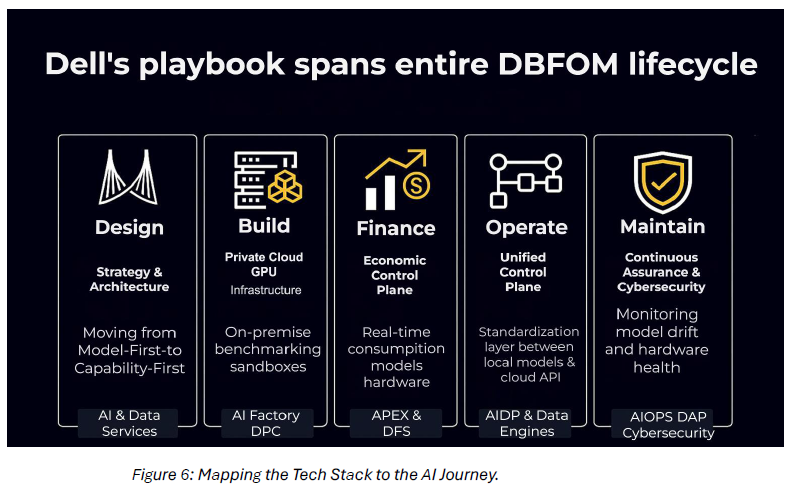

Execution: the strategic playbook across the DBFOM lifecycle

The transition to AI-native operations requires a partner who can support the entire Design, Build, Finance, Operate, and Maintain (DBFOM) lifecycle.

Conclusion: autonomy requires economic clarity

Autonomous networks will not be built on opaque cost models. They will be built by operators who understand, at a granular level, how intelligence is produced and consumed. The call to action is clear: treat AI inferencing as a governed service, embed cost transparency into your AIOps design, and insist on architectures that preserve flexibility. Those who master the economics of AI today will operate with confidence tomorrow.

References:

Assessing CSPs' progress towards Level 4 autonomous networks